Today’s post will help you implement a secure solution using Azure Data Factory and Azure Key Vault.

Are you looking to securely store connection strings, usernames, and passwords for use in Azure Data Factory? Are you still hardcoding credentials in your Azure Data Factory linked services? This post shows how to move them into Azure Key Vault instead.

This article is valid for Azure Data Factory as a standalone service and Azure Synapse Analytics Workspaces.

Why should I use Azure Key Vault when using Azure Data Factory?

To begin, Azure Key Vault can store connection strings, URLs, SSH certificates, usernames, and passwords for you. Therefore, you don’t need to include such sensitive data in Azure Data Factory.

Storing connection strings in Azure Key Vault also simplifies deploying changes across different environments (Dev/Test/UAT/Prod).

You don’t need to change anything in your Azure Data Factory solution to start using a different connection string. Just point the Azure Key Vault linked service at the Key Vault of the target environment.

If you are working with multiple environments, the best practice is to have one Azure Key Vault per environment.

Azure Key Vault takes your security to the next level for data integration solutions with Azure Data Factory.

When shouldn’t I use Azure Key Vault when using Azure Data Factory?

As a rule of thumb, don’t store any passwords if they are not strictly required. Likewise, keep in mind the limitations of the system that you are working with.

Some examples:

- On-premises – don’t use SQL Server credentials. Use Active Directory service accounts.

- In Azure – don’t use SQL Server credentials. Use Azure managed identities. In my upcoming blog posts, we will explore how to implement managed identities.

- Don’t overcomplicate its usage – For example, storing a database name could be a good idea if the name of the database is different between the environments, but storing table names is not a good idea.

How do Azure Data Factory and Key Vault work?

It’s quite simple. Azure Data Factory accesses any required secret from Azure Key Vault when required.

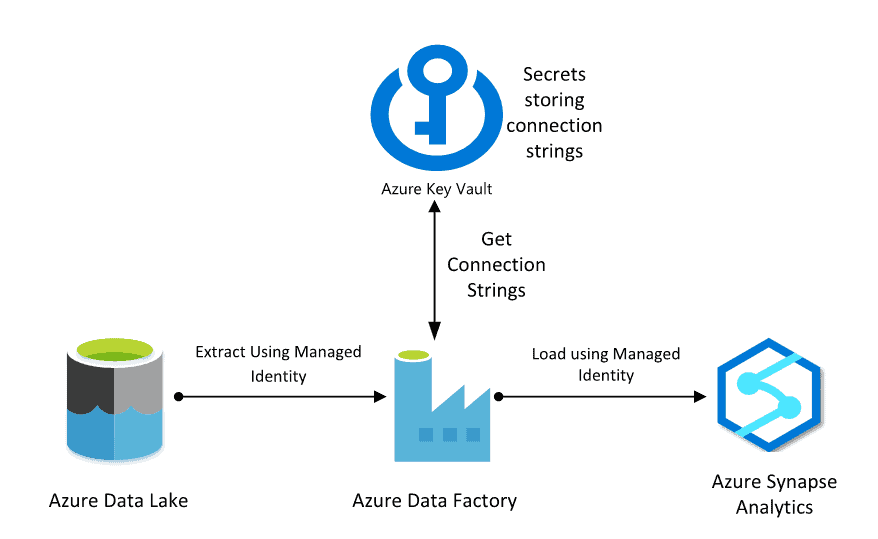

For example, imagine that you need to move information from Azure Data Lake to Azure Synapse Analytics and you want to store the connection strings in Azure Key Vault. The following diagram explains the flow between the environments.

Configure Azure Key Vault

Next, let’s use the previous example for the tutorial. Create secrets in your Azure Key Vault to store the connection strings for the Azure Synapse Analytics and Azure Data Lake linked services. The configuration has two main steps:

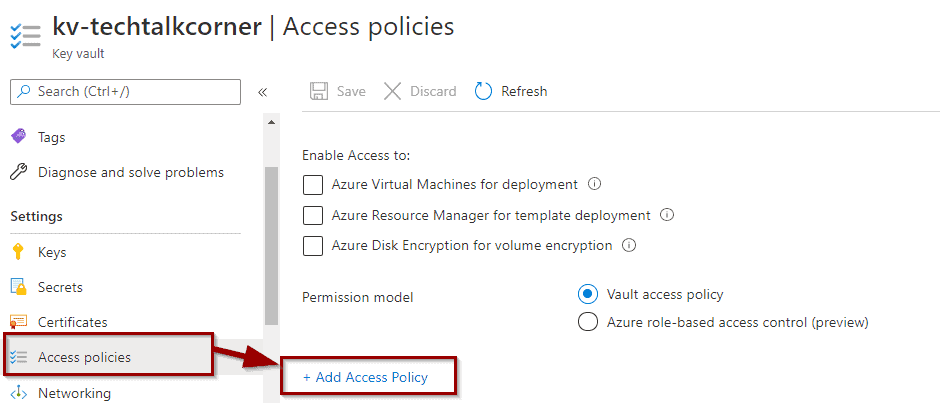

- Grant access to Azure Data Factory to list and get secrets

- Create Azure Key Vault secrets

Grant access to Azure Data Factory to list and get secrets

To begin, let’s grant access to your Azure Data Factory.

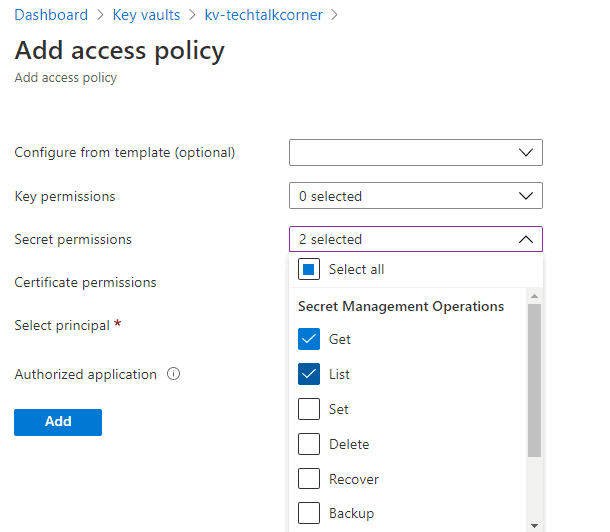

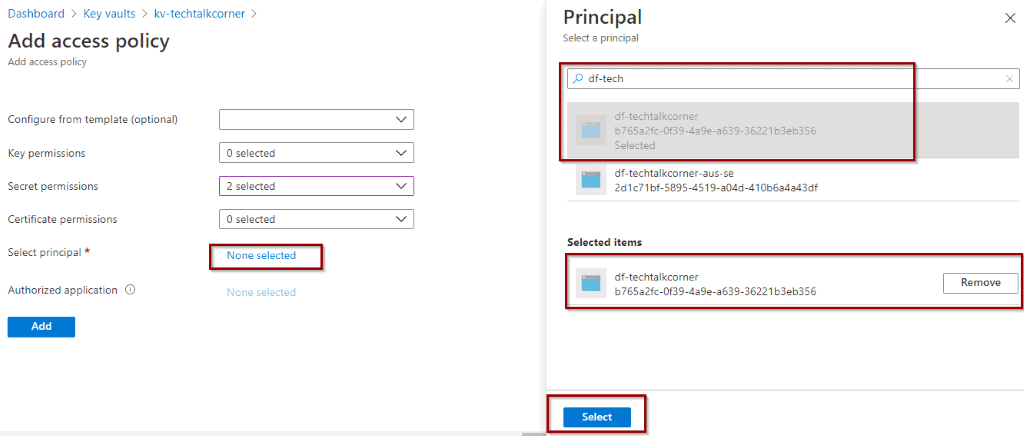

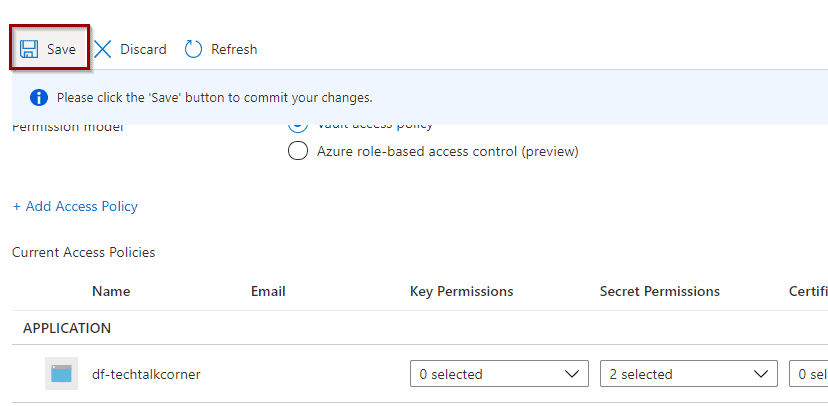

Assign permissions to get and list secrets.

Now it’s time to give access to your Azure Data Factory. The name of the principal will be the name of your Azure Data Factory service, because the factory’s managed identity is named after the factory itself.

Then, click “Add” and “Save.”

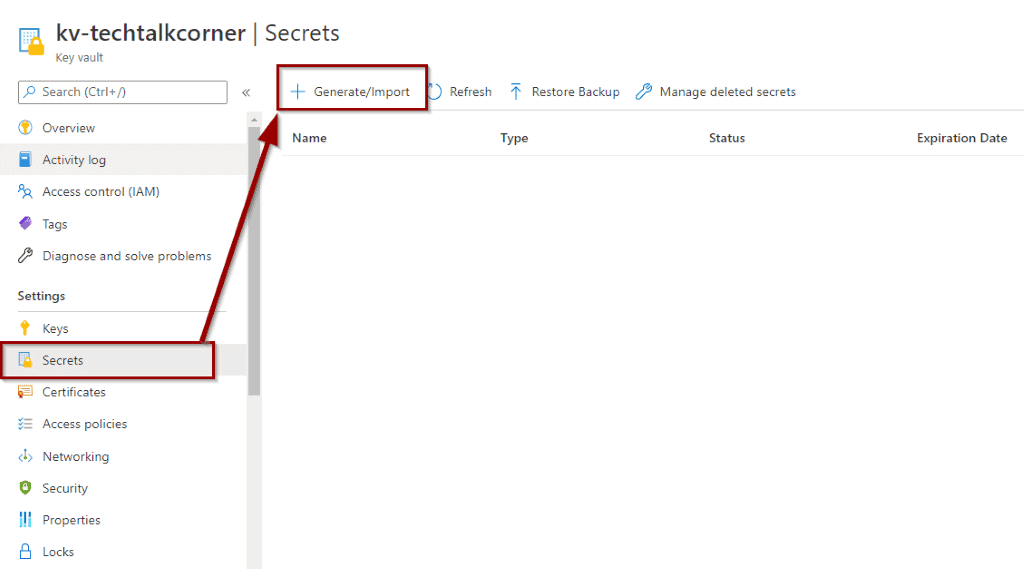

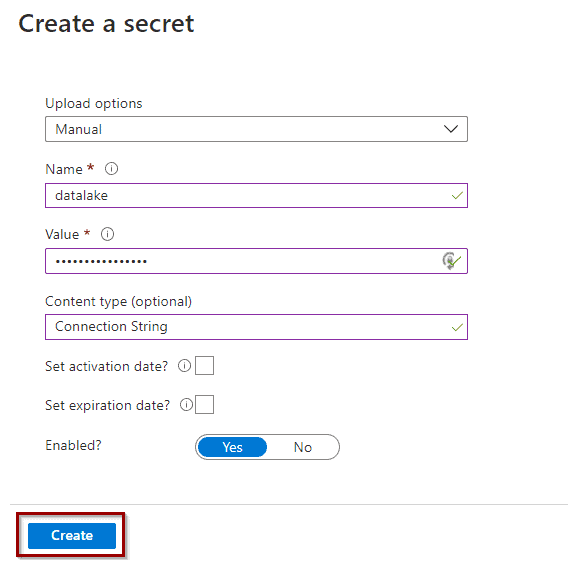
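If you would rather script this step than click through the portal, the same grant can be written as an ARM template resource. This is only a minimal sketch, assuming your vault uses the access policy permission model rather than Azure RBAC. The parameter names `keyVaultName` and `dataFactoryPrincipalId` are placeholders I made up for illustration; the second one stands for the object ID of your Azure Data Factory’s managed identity.

```json
{
    "type": "Microsoft.KeyVault/vaults/accessPolicies",
    "apiVersion": "2019-09-01",
    "name": "[concat(parameters('keyVaultName'), '/add')]",
    "properties": {
        "accessPolicies": [
            {
                "tenantId": "[subscription().tenantId]",
                "objectId": "[parameters('dataFactoryPrincipalId')]",
                "permissions": {
                    "secrets": ["get", "list"]
                }
            }
        ]
    }
}
```

The `secrets` permissions match the get and list permissions assigned in the portal steps; no key or certificate permissions are granted.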

Create Azure Key Vault secrets

The next step is to create secrets.

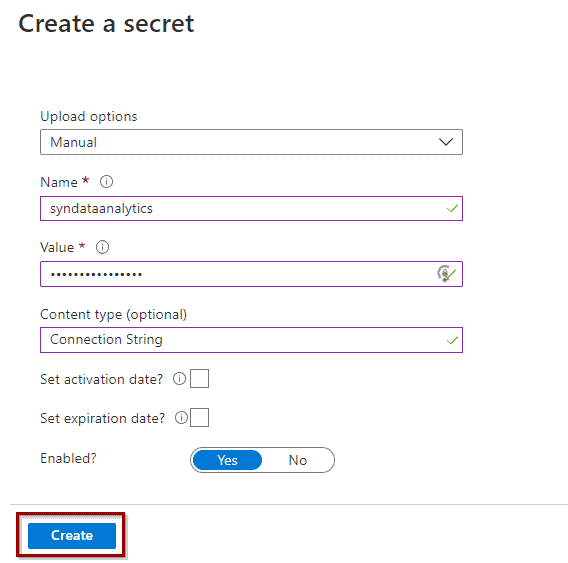

Azure Synapse Analytics

- Connection string value format:

Integrated Security=False;Encrypt=True;Connection Timeout=30;Data Source=servername.database.windows.net;Initial Catalog=databasename

Azure Data Lake

- Connection string value format:

https://storageaccountname.dfs.core.windows.net

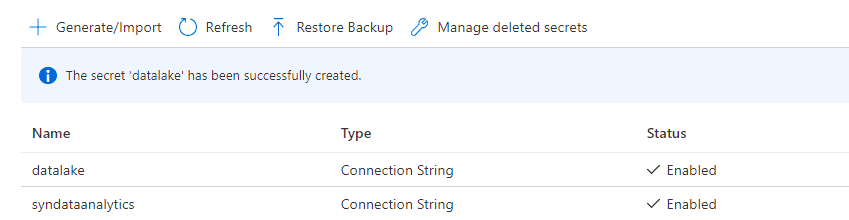

Once the two secrets have been created, they will become available on the list.

Configure Azure Data Factory

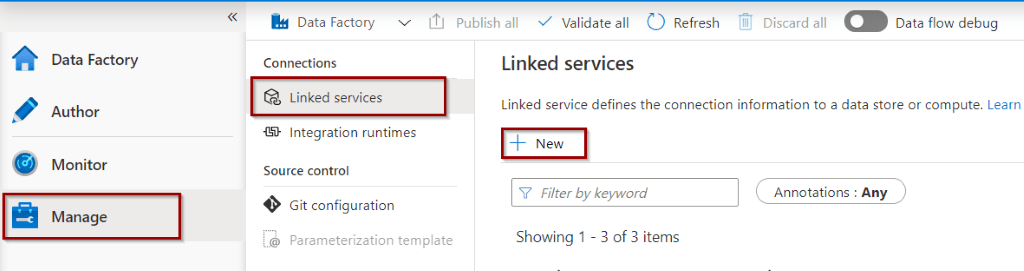

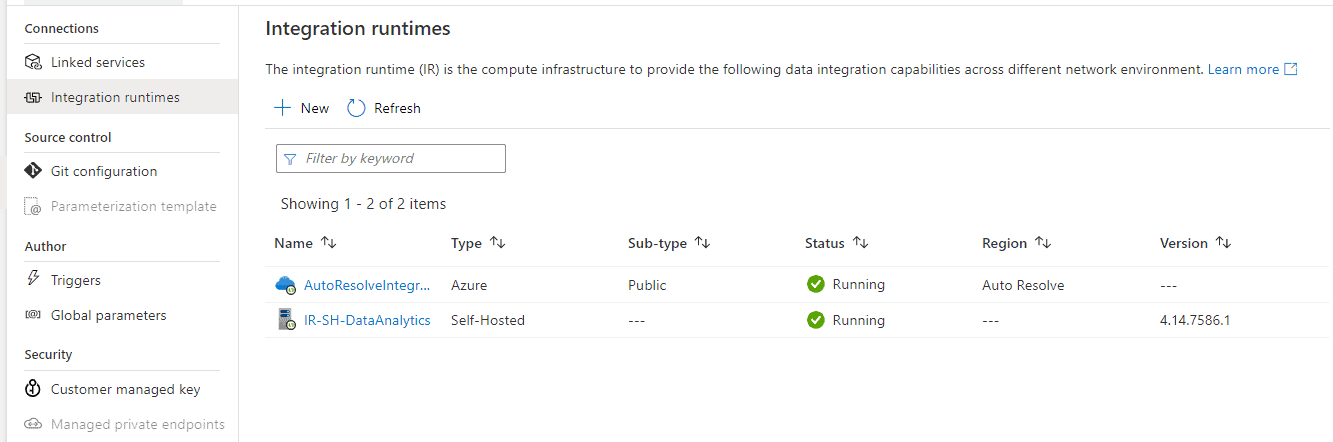

When configuring Azure Data Factory, you need to create a linked service for Azure Key Vault before you can start using it.

Create Azure Key Vault Linked Service

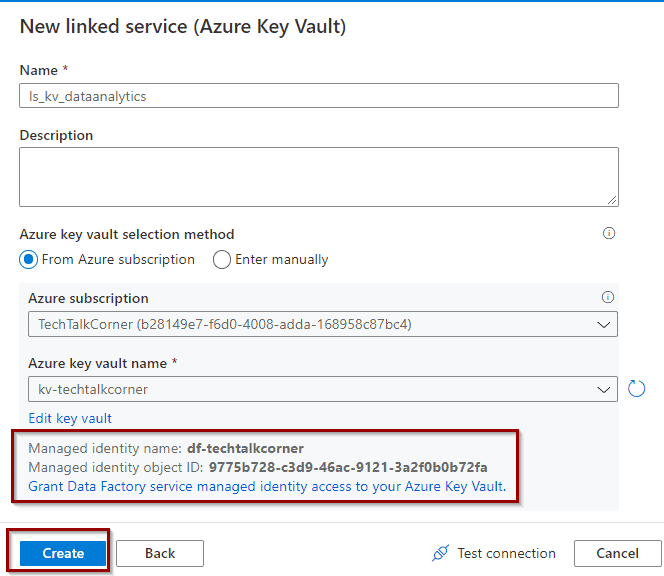

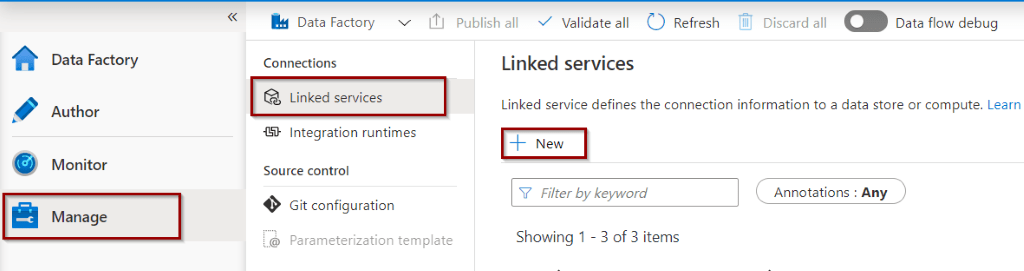

To create a linked service, in your Azure Data Factory, go to Manage->Linked Services->New.

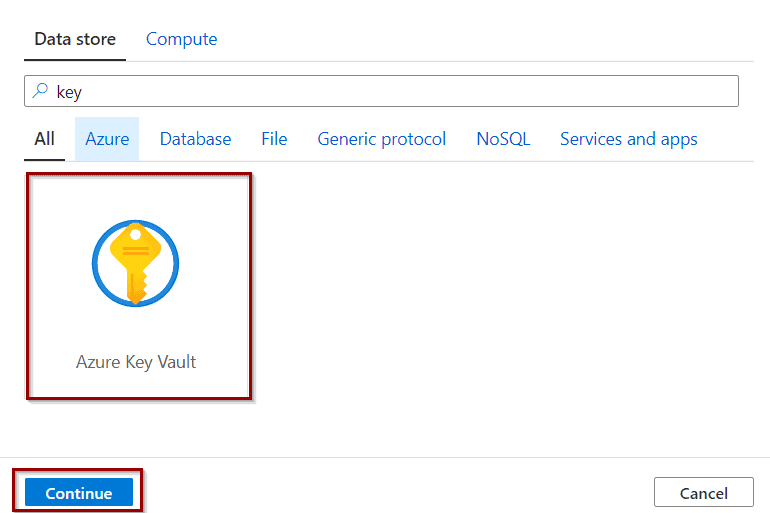

After that, search for Azure Key Vault and click continue.

Now you can select your Azure Key Vault and click create.

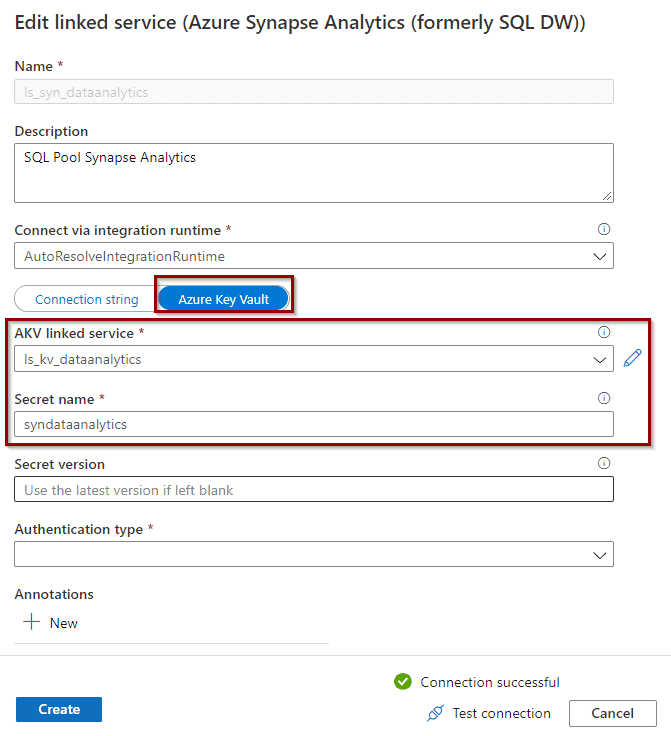
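For reference, the definition behind an Azure Key Vault linked service is very small. The following is a sketch of what it typically looks like; the linked service name `LS_AzureKeyVault` and the vault name in the URL are placeholders, not values from this tutorial.

```json
{
    "name": "LS_AzureKeyVault",
    "properties": {
        "type": "AzureKeyVault",
        "typeProperties": {
            "baseUrl": "https://your-key-vault-name.vault.azure.net/"
        }
    }
}
```

When you move between environments, `baseUrl` is the only value that needs to change, as long as the secret names are the same in every Key Vault.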

Create Azure Synapse Linked Service

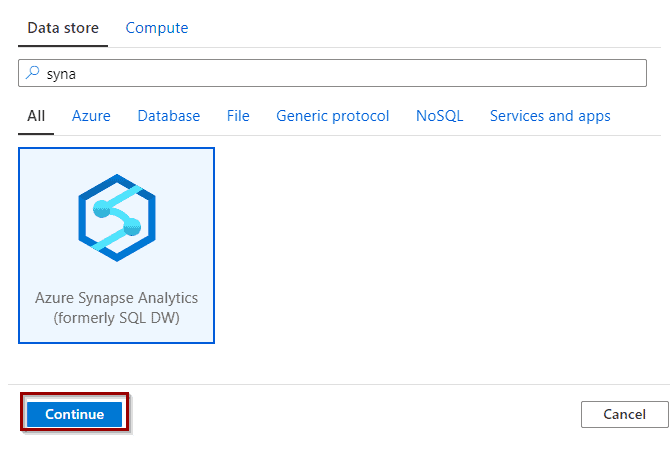

Now that you have Azure Key Vault configured, create the linked service for Azure Synapse Analytics. Search for Azure Synapse and click continue.

Configure it to use the Azure Key Vault and the secret name.
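Behind the scenes, selecting Azure Key Vault for the connection string produces a definition along these lines. Treat it as a sketch rather than the exact output of your factory: the name `LS_AzureSynapse` is a placeholder, and you need to replace the Key Vault linked service name and the secret name with your own.

```json
{
    "name": "LS_AzureSynapse",
    "properties": {
        "type": "AzureSqlDW",
        "typeProperties": {
            "connectionString": {
                "type": "AzureKeyVaultSecret",
                "store": {
                    "referenceName": "key vault linked service name here",
                    "type": "LinkedServiceReference"
                },
                "secretName": "secret name here"
            }
        }
    }
}
```

The connection string itself never appears in the Azure Data Factory definition. Only the reference to the secret is stored.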

Create Azure Data Lake Linked Service

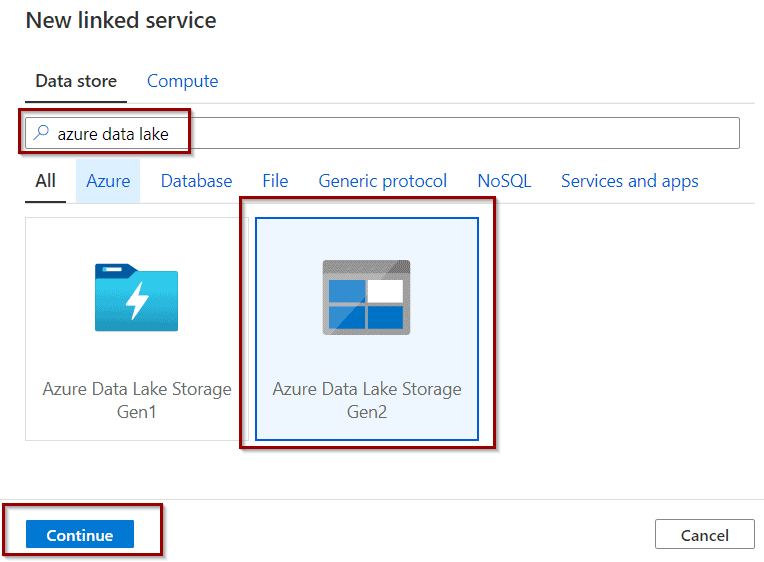

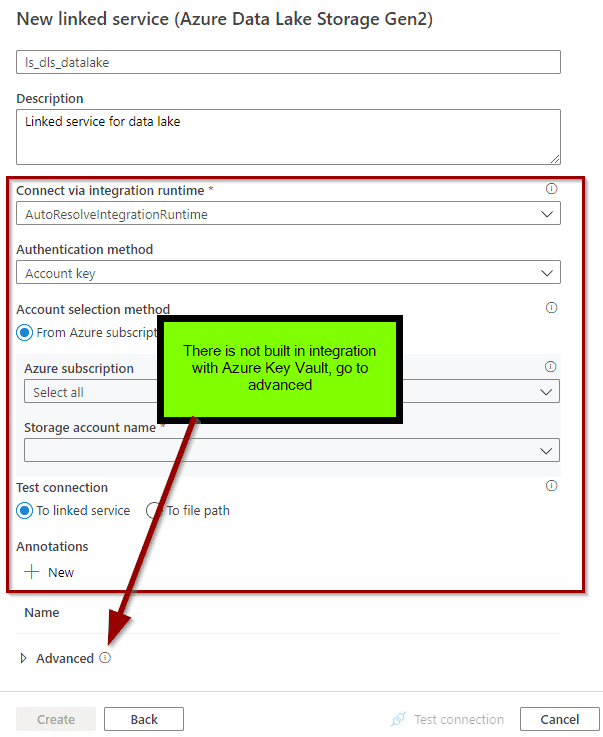

Create the Linked Service for a Data Lake. Search for Azure Data Lake and click continue.

Wait! What? There aren’t any options to use Azure Key Vault. You can still edit the JSON definition of the linked service to use it.

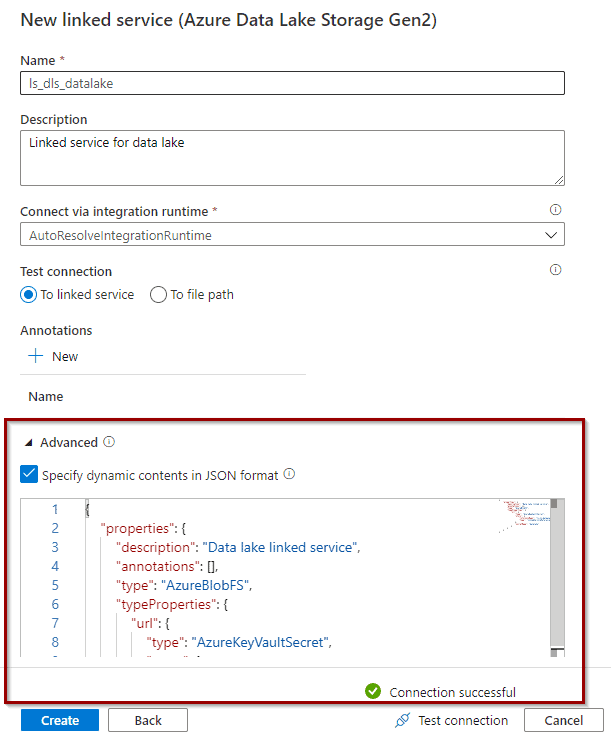

In the advanced settings, paste the following JSON and replace the Key Vault linked service name and the secret name with your own.

```json
{
    "properties": {
        "description": "Data lake linked service",
        "annotations": [],
        "type": "AzureBlobFS",
        "typeProperties": {
            "url": {
                "type": "AzureKeyVaultSecret",
                "store": {
                    "referenceName": "key vault linked service name here",
                    "type": "LinkedServiceReference"
                },
                "secretName": "secret name here"
            }
        }
    }
}
```

Summary

In this post, you’ve learned how to integrate Azure Key Vault into your Azure Data Factory solution. Now you’re ready to start creating datasets and pipelines.

Final Thoughts

I haven’t developed an Azure Data Factory solution that does not take advantage of Azure Key Vault in one way or another. Using it is one of the standard practices for Azure Data Factory development.

While this tutorial is just the tip of the iceberg, it is a great starting point.

What’s Next?

In upcoming blog posts, we’ll continue to explore some of the features within Azure Services.

Please follow Tech Talk Corner on Twitter for blog updates, virtual presentations, and more!

As always, please leave any comments or questions below.

1 Response

Darren

21.07.2022

Hi. Thanks for this. I am successfully using Key Vaults for secret management, and my Synapse Workspace can retrieve secrets using the Linked Service to the KV. My question is, can secrets be entered/added into the KV from Synapse Workspace or does this have to be done in the Azure Web Portal only? Thanks