Azure Data Factory Global Parameters help you simplify how you develop and maintain your Azure Data Factory solutions. They are constants, values that cannot be changed during a pipeline run. You can reference them in any pipeline expression and pass them from there to your activities, datasets, linked services, and more.


Why and When to Use Azure Data Factory Global Parameters

To begin, I like to think of global parameters as the Azure Data Factory equivalent of project parameters in SQL Server Integration Services (SSIS). The idea is to reduce the number of places where you need to change a value that is used across different objects.

To help you decide when to use Azure Data Factory Global Parameters, here are some sample scenarios:

- When you need to define a constant value. For example:

  - Environment

  - Email address for custom notification handling

- When you want to enable or disable specific features across all your pipelines. For example:

  - Performance metrics analytics

  - Data consistency verification

- When you want to control advanced copy activity options (you might need more than one parameter per system). For example:

  - Write batch size

  - Max concurrent connections

  - Write batch timeout

  - Data integration units (DIUs)

  - Degree of copy parallelism

  - Logging level

- I highly recommend storing connection strings in Azure Key Vault and referencing them from Azure Data Factory. However, consider a scenario where you are not using Key Vault and you have a large number of linked services that connect to the same server but to different databases.

  If you migrate the server to a different IP address, you need to update every linked service with the new connection string. If instead the linked services are parameterized and receive the server name from a global parameter, you only need to change one value.

- Azure Data Factory Global Parameters can be included in ARM templates, so you can override their values when deploying to other environments with Azure DevOps pipelines.
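To make the "change one value" idea from the connection string scenario concrete, here is a minimal Python sketch that models it outside of Azure Data Factory. It assumes a hypothetical global parameter named SqlServerName and three hypothetical databases; in ADF itself you would parameterize the linked service and pass the global parameter from the pipeline, rather than run code like this.

```python
# Hypothetical global parameter value; in ADF it would be defined in the Manage hub.
global_parameters = {"SqlServerName": "sql-old.example.com"}

# Hypothetical databases, one per linked service, all hosted on the same server.
databases = ["Sales", "Finance", "Marketing"]


def build_connection_string(server: str, database: str) -> str:
    """Build a simple SQL Server-style connection string for one database."""
    return f"Server=tcp:{server},1433;Database={database};"


def resolve_linked_services(params: dict, dbs: list) -> dict:
    """Derive every connection string from the single shared server value."""
    return {db: build_connection_string(params["SqlServerName"], db) for db in dbs}


before = resolve_linked_services(global_parameters, databases)

# Migrating the server: one assignment updates every derived connection string.
global_parameters["SqlServerName"] = "sql-new.example.com"
after = resolve_linked_services(global_parameters, databases)

print(after["Finance"])  # Server=tcp:sql-new.example.com,1433;Database=Finance;
```

The point of the design is that no linked service stores the server name itself; each one derives it from the shared value at resolution time.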

I am sure you can find more scenarios where using Azure Data Factory Global Parameters is a great idea. Leave a comment down below if you come up with any other scenarios!

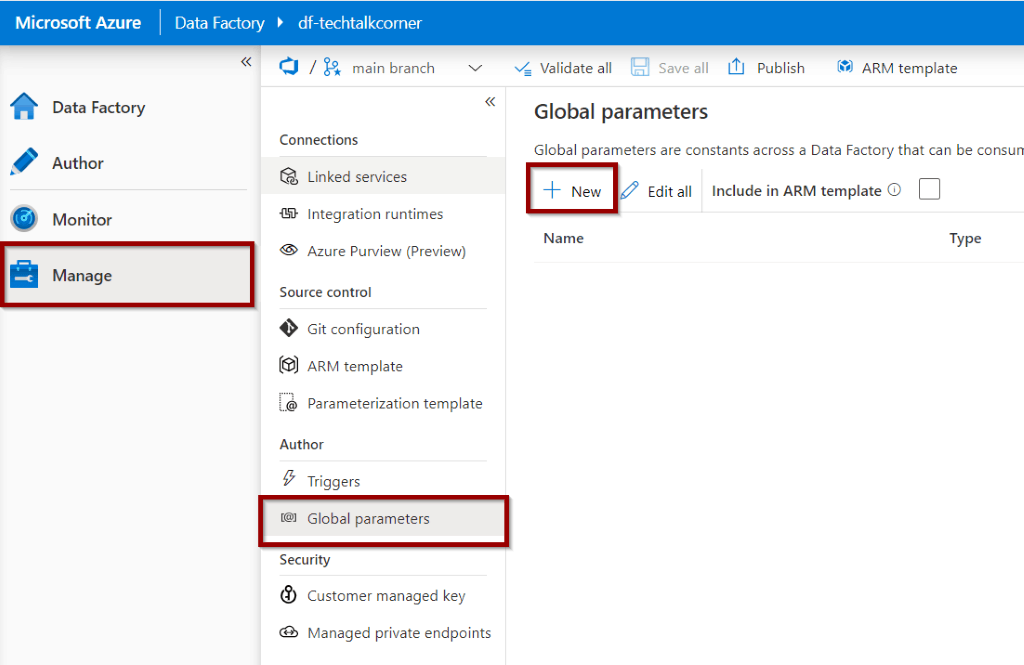

Create Azure Data Factory Global Parameters

First, go to the Manage hub and select the Global parameters option to create Azure Data Factory Global Parameters.

Let’s create two parameters for our sample.

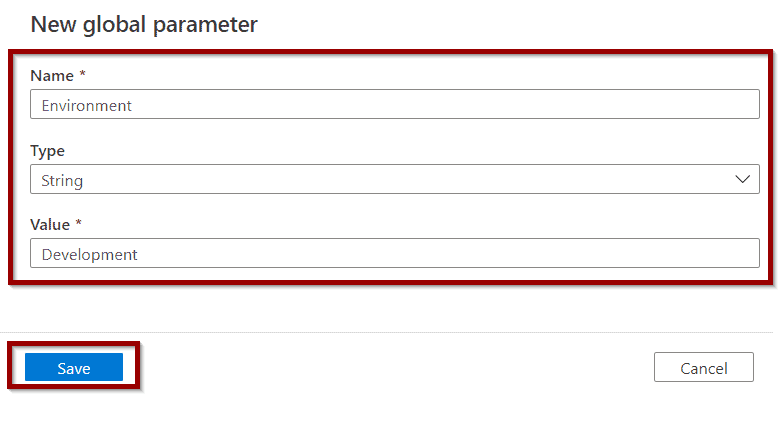

- Environment

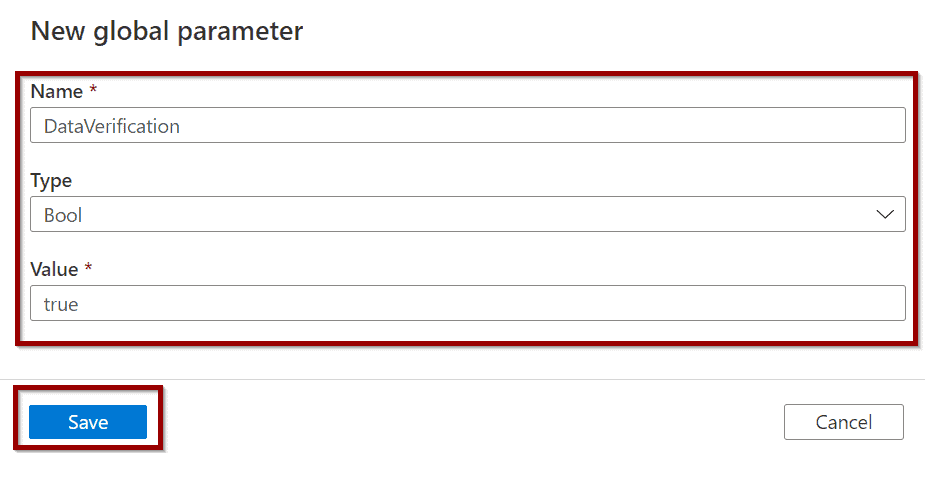

- DataVerification

The first parameter is a constant for the environment name.

The second parameter is a constant that enables or disables data verification.

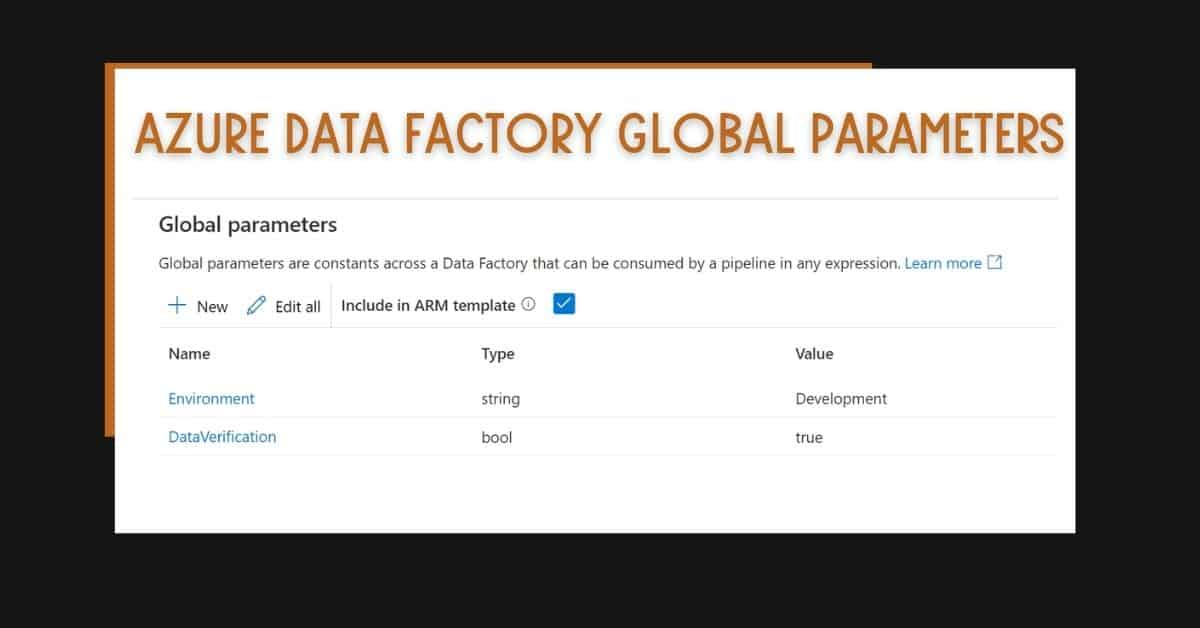

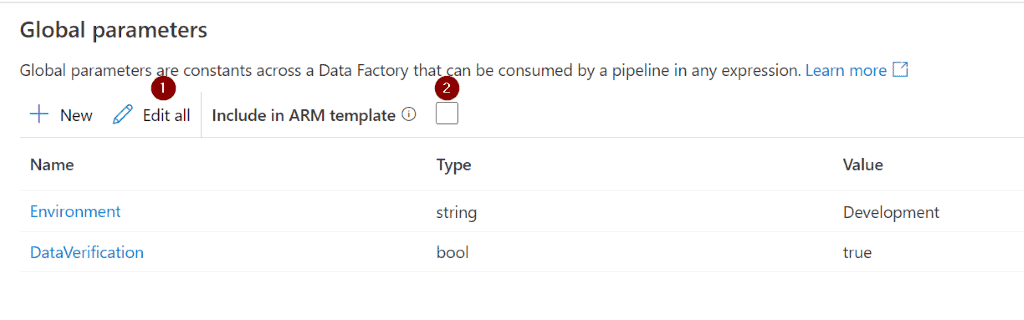

The parameters now appear in the list.

You can edit them (1) and include them in the ARM template (2).

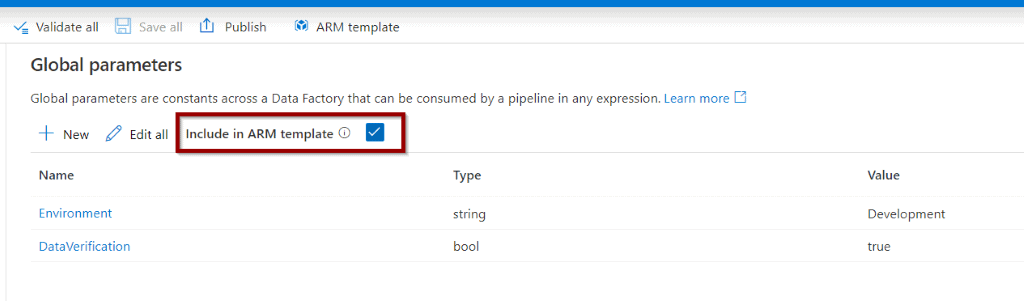
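Behind the UI, global parameters are stored in the factory definition as name, type, and value entries under properties.globalParameters. The Python sketch below builds that shape for the two parameters created above and checks each value against its declared type. The type names follow the factory JSON (String, Int, Float, Bool, Array, Object); the values Development and True are assumptions for illustration, and the type check is a local helper, not something ADF runs.

```python
import json

# Type names accepted in a factory's globalParameters section,
# mapped to the Python type we expect each value to have.
ALLOWED_TYPES = {
    "String": str,
    "Int": int,
    "Float": float,
    "Bool": bool,
    "Array": list,
    "Object": dict,
}


def global_parameter(param_type: str, value):
    """Return one global parameter entry, checking the value matches its declared type."""
    expected = ALLOWED_TYPES[param_type]
    # bool is a subclass of int in Python, so reject it explicitly for Int parameters.
    if param_type == "Int" and isinstance(value, bool):
        raise TypeError("Int parameter cannot hold a bool")
    if not isinstance(value, expected):
        raise TypeError(f"{param_type} parameter cannot hold {type(value).__name__}")
    return {"type": param_type, "value": value}


# The two parameters from this example (values are assumptions).
global_parameters = {
    "Environment": global_parameter("String", "Development"),
    "DataVerification": global_parameter("Bool", True),
}

print(json.dumps({"properties": {"globalParameters": global_parameters}}, indent=2))
```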

Include Azure Data Factory Global Parameters in ARM Template

If you enable the option to include global parameters in the Azure Resource Manager (ARM) template, Azure Data Factory modifies the ARM template and creates an additional folder in your repository the next time you publish your changes.

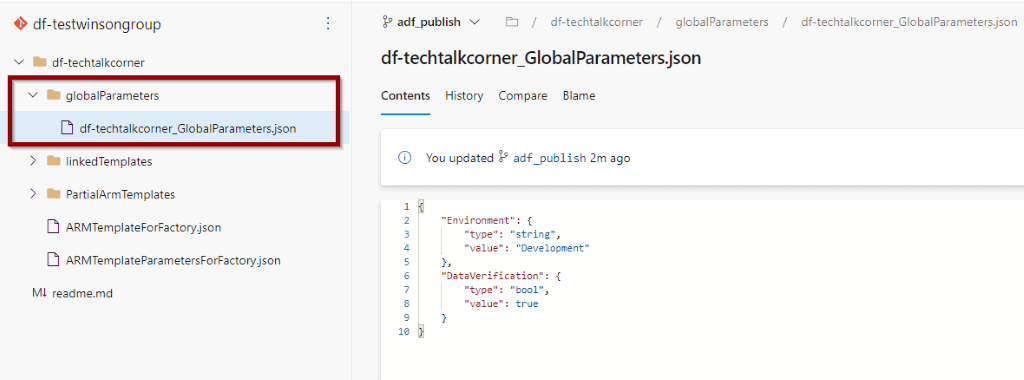

Here you can see the additional folder.

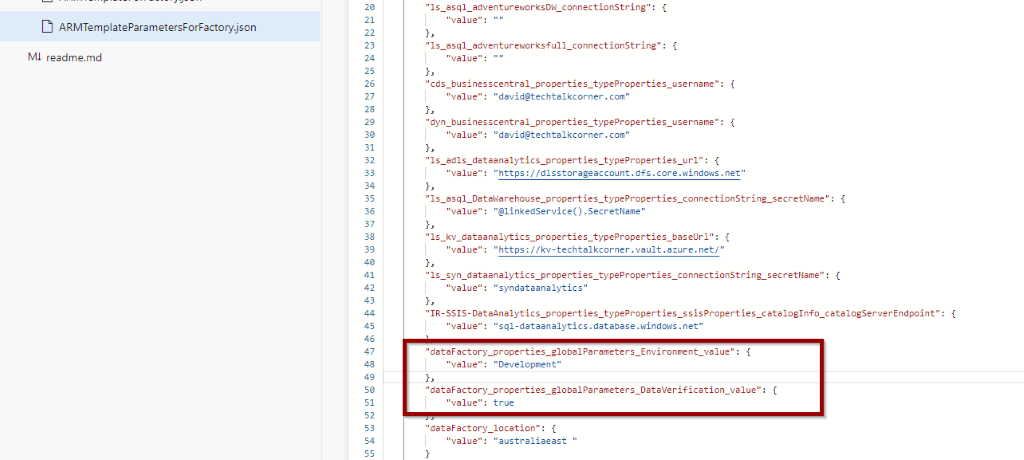

You can also see how the parameters are used in the ARM template.

You can override the values of the global parameters when deploying the ARM template to other environments through CI/CD with Azure DevOps pipelines.
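In Azure DevOps the usual route is the Override template parameters field of the ARM template deployment task. As an illustration of the same transformation, here is a minimal Python sketch that overrides values in an ARM parameters file before deployment. The parameter name stored in ENV_PARAM and the factory names are hypothetical; look up the real names in the generated ARMTemplateParametersForFactory.json in your publish branch.

```python
import json


def override_parameters(params_file: dict, overrides: dict) -> dict:
    """Return a copy of an ARM parameters file with selected values replaced.

    Raises KeyError for names that are not in the file, so a typo does not
    silently deploy the original value.
    """
    updated = json.loads(json.dumps(params_file))  # deep copy of plain JSON data
    for name, value in overrides.items():
        if name not in updated["parameters"]:
            raise KeyError(f"Unknown ARM template parameter: {name}")
        updated["parameters"][name]["value"] = value
    return updated


# Hypothetical parameter name for the Environment global parameter.
ENV_PARAM = "myFactory_properties_globalParameters_Environment_value"

dev_params = {
    "$schema": "https://schema.management.azure.com/schemas/2015-01-01/deploymentParameters.json#",
    "contentVersion": "1.0.0.0",
    "parameters": {
        "factoryName": {"value": "myFactory"},
        ENV_PARAM: {"value": "Development"},
    },
}

prod_params = override_parameters(
    dev_params, {ENV_PARAM: "Production", "factoryName": "myFactoryProd"}
)
print(json.dumps(prod_params["parameters"], indent=2))
```

Failing on unknown names is a deliberate choice: a misspelled override would otherwise leave the development value in place without any warning.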

Use Azure Data Factory Global Parameters

At this point you have created some Azure Data Factory Global Parameters, but nothing uses them yet. You need to reference them from your pipeline objects.

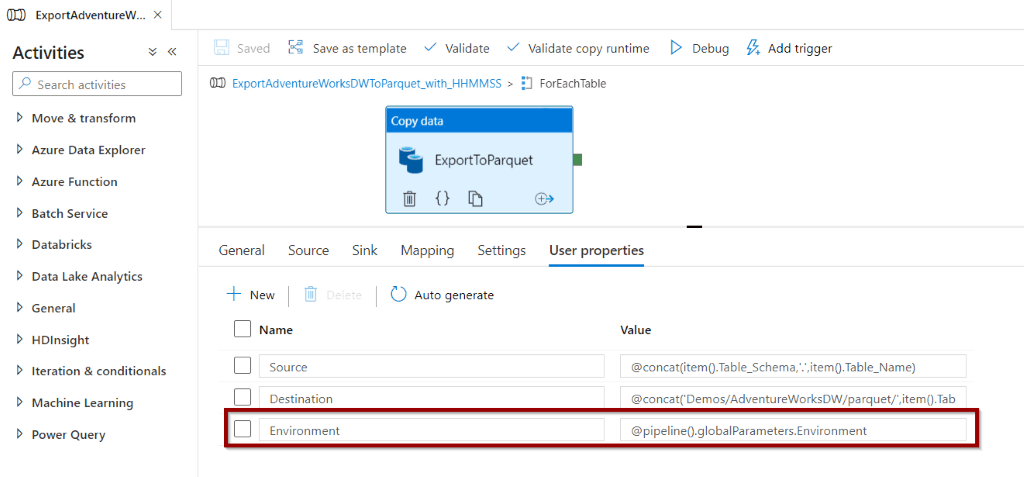

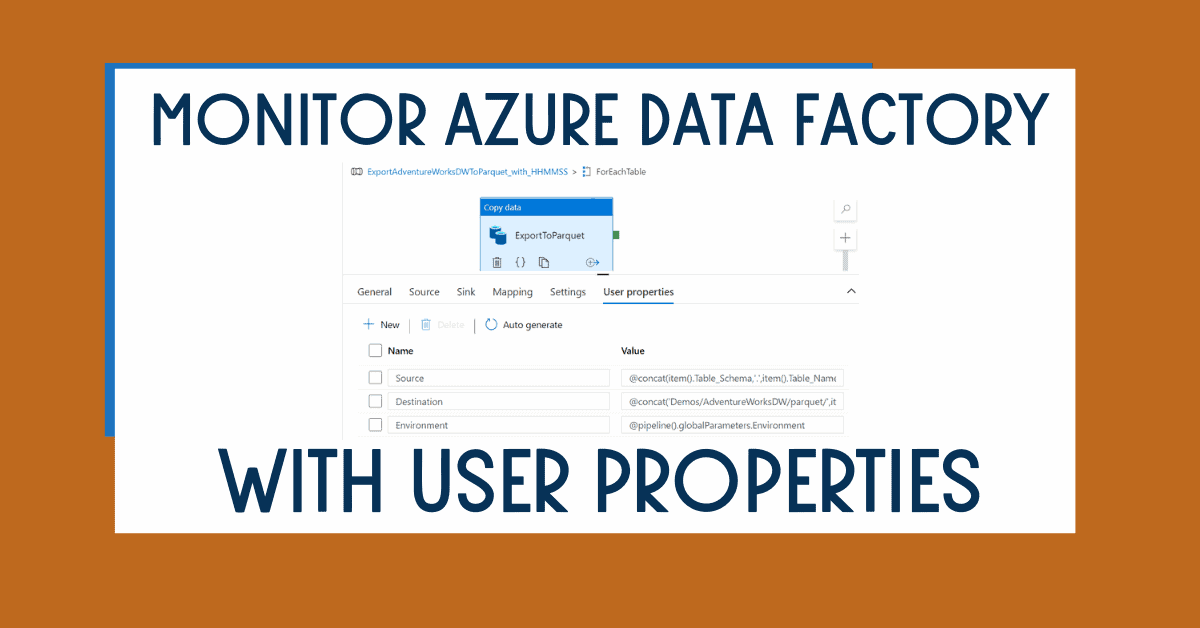

Let’s assign the Environment parameter to one of our copy activity’s user properties to enhance monitoring capabilities.

User properties don’t offer the dynamic content option, so just copy and paste “@pipeline().globalParameters.Environment” into the value.
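In the pipeline JSON, a user property is a name/value pair in the activity’s userProperties array, so the pasted expression is stored there as a string. The Python sketch below builds that fragment. The activity name CopySalesData is hypothetical, and the helper only manipulates a JSON-style dictionary; it does not call Azure.

```python
import json


def with_user_property(activity: dict, name: str, value: str) -> dict:
    """Return a copy of an activity definition with one extra user property appended."""
    updated = dict(activity)
    updated["userProperties"] = list(activity.get("userProperties", [])) + [
        {"name": name, "value": value}
    ]
    return updated


# Hypothetical copy activity; only the fields needed for this example are shown.
copy_activity = {"name": "CopySalesData", "type": "Copy", "typeProperties": {}}

copy_activity = with_user_property(
    copy_activity,
    "Environment",
    # The same expression you paste into the user property value in the UI.
    "@pipeline().globalParameters.Environment",
)
print(json.dumps(copy_activity["userProperties"], indent=2))
```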

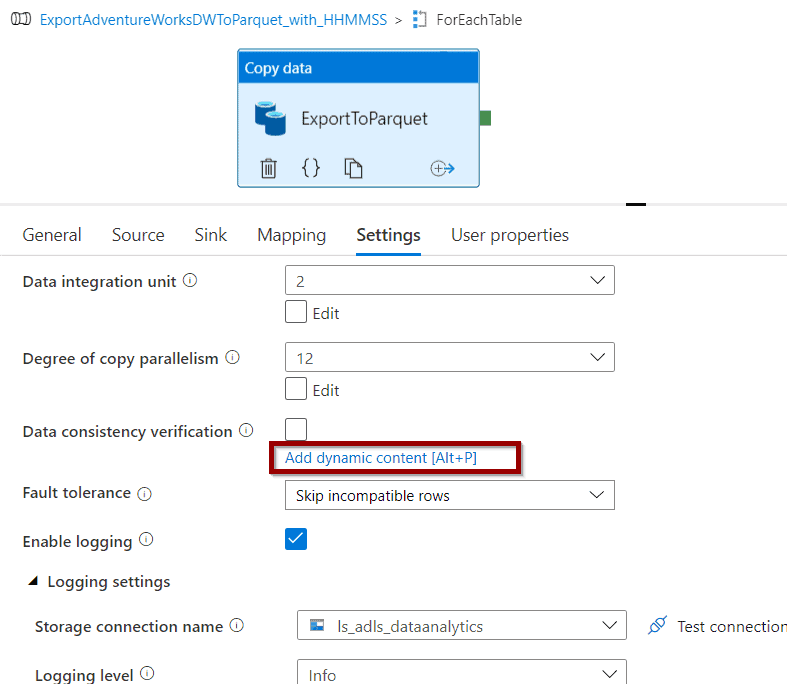

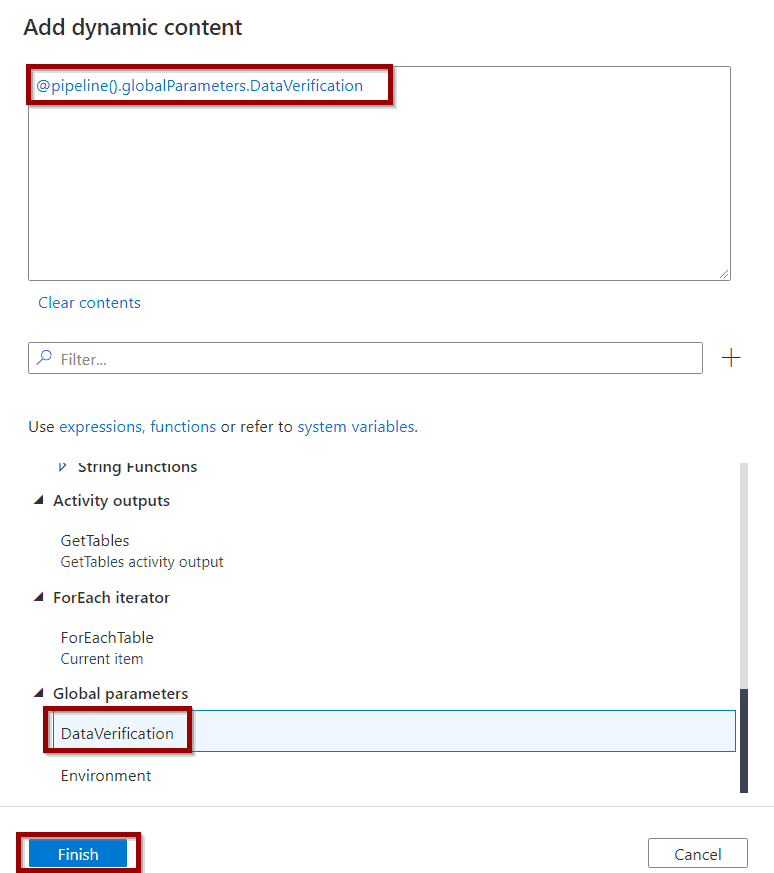

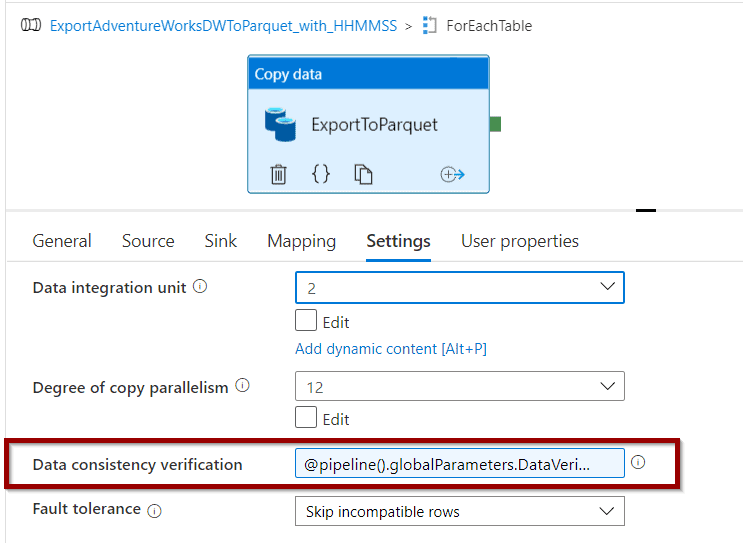

Now, let’s add the second Azure Data Factory Global Parameter to enable data verification. I will use the same copy activity for this.

Select the global parameter and save.

The global parameter is now referenced in the activity configuration.
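When you pick a global parameter as dynamic content, the copy activity JSON stores an expression object instead of a literal value. The Python sketch below shows that shape for the data consistency verification setting (validateDataConsistency). It also applies the same pattern to two of the advanced copy options listed earlier in this post; the global parameters CopyParallelism and CopyDIU are hypothetical and would need to be created first.

```python
import json


def expression(text: str) -> dict:
    """Wrap an ADF expression string in the object form used for dynamic content."""
    return {"value": text, "type": "Expression"}


copy_type_properties = {
    # Source and sink definitions omitted; they depend on your datasets.
    "validateDataConsistency": expression("@pipeline().globalParameters.DataVerification"),
    # Hypothetical global parameters for the advanced copy options.
    "parallelCopies": expression("@pipeline().globalParameters.CopyParallelism"),
    "dataIntegrationUnits": expression("@pipeline().globalParameters.CopyDIU"),
}

print(json.dumps(copy_type_properties, indent=2))
```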

That’s all folks! You’ve learned how to use global parameters.

Summary

Today, you learned about Azure Data Factory Global Parameters: how to create and use them to simplify your Azure Data Factory solutions.

What’s Next?

In upcoming blog posts, we’ll continue to explore some of the features within Azure Data Services.

As always, please leave any comments or questions below.

If you haven’t already, you can follow me on Twitter for blog updates, virtual presentations, and more!

2 Responses

Alpa.Buddhabhatti

03.03.2022

Thank you David.

I used the same approach, and it works: it generates the parameters in ADF_publish.

However, after deploying it via CI/CD, when I open ADF it automatically disconnects the Git configuration. Also, the check box to include the parameters in the ARM template is disabled.

Can you please help me on this ?

Thanks ,

Alpa

David Alzamendi

01.04.2022

Hi Alpa Buddhabhatti,

I was able to reproduce the solution above. Did you make sure your Production solution is not connected to the same repository? Let me know if you still have this issue.

Regards,

David